Agile metrics – the good, the bad and the ugly

Agile teams often look at and discuss metrics. Some of these metrics are interesting and important, some of them are of questionable value, and some should only be used with caution. This article will attempt to clear this up.

The use and misuse of metrics

Before going into the various metrics that agile teams use, it is first important to understand the context and purpose of using metrics. Agile metrics should be used by a team to perform introspection on their own performance or to make calculations to feed into their own release planning. And you know what this means?

It means if people outside the team, such as middle managers, want to start inspecting metrics, that is a warning sign. Metrics should not be used to compare one team against another team, because the teams are operating in different contexts and building different things.

Be wary of anyone who wants to use metrics, especially velocity, to find the “underperforming teams”. If they do, ask them if they have metrics on who the underperforming middle managers are!

Burnup / burndown

This is a simple and useful one, and to be honest it is more a representation than an actual metric. A burnup chart plots the work the team has delivered so far against the total amount of work needed to complete the release, so you can see at a glance how fast the gap is closing.

The burndown chart is basically the same but inverted; it shows how many points remain to be delivered in the release as the team completes work. The scrum master, project manager or product owner will probably want to look at these at some point; that’s fine.

My only caveat is that in order for them to be useful, the entire backlog sometimes needs to be estimated, something that I don’t think is a good idea. I suggest instead that you just use average points for all of the stories you haven’t estimated yet.

So for example, you’ve estimated 20 stories, at an average of 4 points per story. You have 50 more stories in the backlog that you haven’t estimated yet. You can average them out at 4 points each, which means the unestimated part of your backlog is roughly 200 points, on top of the 80 points you have already estimated.
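The averaging trick can be sketched in a few lines of Python. This is only an illustration: the function name and the individual point values are made up, and only the 20 estimated stories, the 4-point average and the 50 unestimated stories come from the example above.

```python
def project_backlog_points(estimated_points, unestimated_count):
    """Apply the average of the estimated stories to the unestimated ones.

    Returns (points already estimated, projected points for the rest).
    """
    if not estimated_points:
        raise ValueError("need at least one estimated story to take an average")
    average = sum(estimated_points) / len(estimated_points)
    return sum(estimated_points), average * unestimated_count


# Hypothetical sample: 20 estimated stories averaging 4 points each.
estimated = [3, 5] * 10
known, projected = project_backlog_points(estimated, unestimated_count=50)
print(f"estimated: {known}, projected: {projected}, total: {known + projected}")
```

The projection gets better as more stories are estimated, so it is worth recalculating it each sprint rather than treating the first number as fixed.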

Velocity

This is the one that you need to be careful about since it is frequently used by managers to measure “performance” or “efficiency”. Velocity is simply the number of points the team has delivered on average per sprint. Some important caveats:

- You need at least two or three sprints under your belt before you can establish a meaningful average

- You should probably use a rolling average of the last four or five sprints

- You should use it for release planning

- You can maybe use it for sprint planning (but not necessarily)

- And you should not use it for anything else, especially not comparing performances of teams. If you’re not convinced, I wrote in detail about why you shouldn’t use velocity to compare teams.
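As a rough sketch of the caveats above, here is one way to compute a rolling velocity and use it for release planning. The function names, the window of five sprints, the minimum of three sprints and the sample numbers are all assumptions chosen for illustration, not a standard.

```python
import math


def rolling_velocity(sprint_points, window=5, minimum_sprints=3):
    """Average points per sprint over the most recent `window` sprints.

    Returns None until the team has completed `minimum_sprints` sprints,
    because an average over fewer sprints is not meaningful.
    """
    if len(sprint_points) < minimum_sprints:
        return None
    recent = sprint_points[-window:]
    return sum(recent) / len(recent)


def sprints_remaining(backlog_points, velocity):
    """Release planning: whole sprints needed to deliver the remaining points."""
    return math.ceil(backlog_points / velocity)


# Hypothetical points delivered in each of the last six sprints.
history = [18, 22, 25, 21, 24, 20]
velocity = rolling_velocity(history)
print(velocity, sprints_remaining(280, velocity))
```

Note that nothing in this calculation takes a second team as input; the number only means something relative to the team that produced it.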

Story cycle time

Story cycle time is a good metric and one that is not used enough. It is the average time it takes for a user story to go from Ready for Dev (or whatever your equivalent Kanban state is) to Complete (or whatever your equivalent Kanban state is).

Different organisations have different definitions of “ready” for development or production, and that’s ok. But try and be consistent within the same organisation. You want your story cycle time to be low, ideally around half of your sprint length.

Remember, a story should be of a size that a team can complete in a sprint. Some will be a bit bigger, some will be smaller. So if your average is half your sprint length, you should be able to complete most of them in a sprint. And that’s a good thing.
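If your tracking tool lets you export the dates on which each story entered Ready for Dev and Complete, the average is easy to work out yourself. The sketch below assumes calendar days, a two-week sprint and made-up dates; the function name is hypothetical.

```python
from datetime import date


def average_cycle_time_days(stories):
    """stories: list of (ready_for_dev, complete) date pairs."""
    durations = [(complete - ready).days for ready, complete in stories]
    return sum(durations) / len(durations)


# Hypothetical export of three completed stories.
stories = [
    (date(2024, 3, 4), date(2024, 3, 8)),    # 4 days
    (date(2024, 3, 5), date(2024, 3, 12)),   # 7 days
    (date(2024, 3, 11), date(2024, 3, 15)),  # 4 days
]

sprint_length_days = 14
average = average_cycle_time_days(stories)
on_target = average <= sprint_length_days / 2
print(average, on_target)
```

Whether you count calendar days or working days matters less than counting the same way every time.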

Story lead time

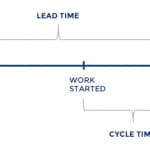

Story lead time is the time from when a story is first created to when it is completed. It includes the cycle time plus all the time the story spent waiting before development started. So story lead time must always be equal to or greater than cycle time.

Cycle time is more useful, but lead time tells an important story. I wrote an article explaining the differences between them.
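To make the relationship concrete, here is a small sketch that derives both numbers from the same story. The `Story` class, its field names and the dates are hypothetical; the only thing taken from the definitions above is which timestamps each metric starts and ends on.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Story:
    created: date
    ready_for_dev: date
    completed: date

    @property
    def cycle_time_days(self):
        # Ready for Dev -> Complete
        return (self.completed - self.ready_for_dev).days

    @property
    def lead_time_days(self):
        # First created -> Complete
        return (self.completed - self.created).days

    @property
    def waiting_days(self):
        # Time spent in the backlog before work could start
        return self.lead_time_days - self.cycle_time_days


story = Story(
    created=date(2024, 2, 1),
    ready_for_dev=date(2024, 3, 4),
    completed=date(2024, 3, 8),
)
print(story.lead_time_days, story.cycle_time_days, story.waiting_days)
```

A large gap between the two usually points at stories sitting in the backlog rather than at slow development.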

Story count

This is a very simple metric but can be quite useful, especially for teams that are using Kanban or Scrum-ban. It is simply the average number of stories a team delivers per sprint. You might be thinking “but what’s the point of that if stories can be of different sizes?” This question has a simple answer: what if stories were all roughly the same size?

If your stories were all the same size, you could use story count instead of velocity. And then you could do away with story level estimation altogether. This is one of the principles of the No Estimates movement.

First-time pass rate

This is the percentage of test cases that pass System Testing (ST) or System Integration Testing (SIT) the first time around. As your teams reach a decent level of agile maturity, this should approach 95 or 100% quickly. If it doesn’t, you have some problems.
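The calculation itself is a plain percentage. A minimal sketch, assuming you can get one pass/fail result per test case from its first execution (the function name and the sample results are invented):

```python
def first_time_pass_rate(first_run_results):
    """Percentage of test cases that passed on their first execution.

    first_run_results: list of booleans, one per test case.
    """
    if not first_run_results:
        raise ValueError("no test results to measure")
    return 100 * sum(first_run_results) / len(first_run_results)


# Hypothetical run: 19 of 20 test cases passed first time.
results = [True] * 19 + [False]
rate = first_time_pass_rate(results)
print(rate)
```

Only the first run of each test case counts here; a test that fails and then passes after a fix still counts as a failure.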